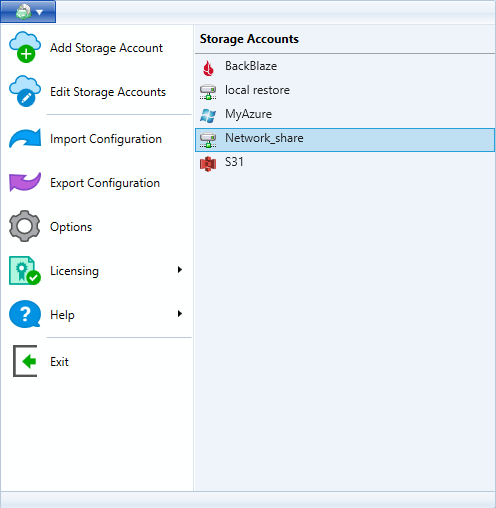

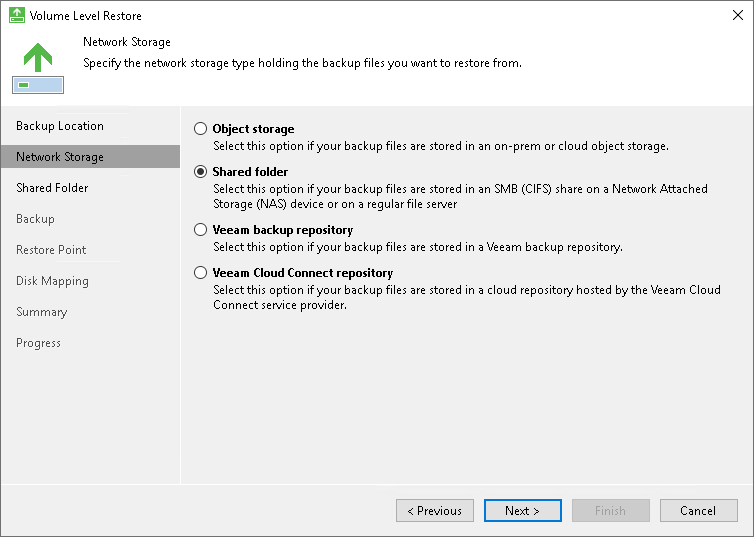

– You can see the available volumes in Windows explorer or by running this command in Powershell:Īdd VHD disks to the VM for the CloudBerry Drive cache: This is the container we created in step 3 above: – Under the Mapped Drives tab, click Add, type-in a volume label, click the button next to Path, and pick a Container. – Under the Storage Accounts tab, click Add, pick Azure Blob as your Storage Provider, enter your Azure Storage account name and key: – Back in the Azure VM, right-click on the Cloudberry icon in the system tray and select Options: – In the Azure Management Portal, obtain your storage account access key (either one is fine): – Run CloudBerryDriveSetup, accept the defaults, and reboot.

#CLOUDBERRY SERVER BACKUP TO LOCAL DRIVE ACCESS PATH DENIED INSTALL#

– Install C++ 2010 圆4 Redistributable pre-requisite: To install CloudBerry Drive on an Azure VM: Using this tool we get 128TB disk suggesting an allocation unit size of 32KB. Microsoft suggests that the maximum NTFS volume size is between 16TB and 256TB on Server 2012 R2 depending on allocation unit size. This approach has the 500TB Storage account limit which is adequate for use with Veeam Cloud Connect. Use a 3rd party tool such as Cloudberry Drive to make Azure block blob storage available to the Azure VM.There’s a maximum of 5TB capacity per share, and a maximum of 1TB capacity per file.The shares are not persistent although we can use CMDKEY tool as a workaround.There’s a couple of issues with this approach: Map drives to a number of Azure File SMB shares.We’ll have to use an expensive A4 sized VM, that has unneeded RAM and CPU cores.There’s a couple of issues with this approach: There’s a number of ways to make Azure storage available to a VM in Azure: There are better ways to copy data within the same Azure Storage account that are much more efficient and much less costly, such as instantaneous shadow copies.ĬloudBerry Drive Server for Windows Server caches files locally which makes it not suitable for use on Azure VMs.The copy incurs transnational, IOPs, and bandwidth charges on an Azure VM unnecessarily.Which makes any other task on the VM like another backup job not practically possible even if it is a different backup job is using other unlocked files, because CloudBerry is using up all available IOPS on the VM for hours or even days The 2nd copy uses a great amount of read IOPS from the local drive (Page Blobs), and write IOPS to the destination Block Blob storage.For example, if the Veeam cloud backup job successfully backed up 10 out of 12 VMs, and we retry the remaining 2 VMs, the job will fail since the destination file in Azure is locked by CloudBerry This makes the destination file in Azure block blob storage locked and unavailable for many hours 
during that 2nd copy process.CloudBerry Drive then takes the uploaded file from the cache folder and copies it to the Azure block blob storage account.It puts a file size limit equivalent to the maximum amount of space on the local drive used for CloudBerry caching.The point of using CloudBerry Drive is to be able to access Azure block blob storage with has a 500 TB maximum per storage account. An Azure VM (as of ) can have a maximum of 16 TB of local storage which is implemented as 16x 1TB VHD files (page blobs). It defeats the purpose of using CloudBerry in the first place.According to CloudBerry support this is a must and cannot be turned off. However, digging deeper into how CloudBerry drive works showed that CloudBerry Drive caches each received file to a local folder on the VM. Specifically, I’m testing Veeam Cloud Connect with Azure, which allows for off-site backup to Azure. The use case I was after is the ability to upload large files from on-prem servers to Azure VMs.

I have examined 10 different tools to perform this task, and CloudBerry drive provided the most functionality. CloudBerry Drive Server for Windows Server is a tool by CloudBerry that makes cloud storage available on a server as a drive letter.
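The relationship between allocation unit size and maximum NTFS volume size mentioned earlier (16TB to 256TB, with a 128TB disk pointing to 32KB units) can be checked with a little arithmetic. The sketch below is only an illustration: it assumes the commonly documented NTFS implementation limit of 2^32 - 1 clusters per volume, and the names `MAX_CLUSTERS`, `max_ntfs_volume_bytes`, and `to_tib` are made up for this example.

```python
# Assumption: Windows NTFS supports at most 2**32 - 1 clusters per volume.
MAX_CLUSTERS = 2**32 - 1


def max_ntfs_volume_bytes(allocation_unit_bytes: int) -> int:
    """Largest volume size in bytes for a given allocation unit (cluster) size."""
    return MAX_CLUSTERS * allocation_unit_bytes


def to_tib(size_bytes: int) -> float:
    """Convert bytes to binary terabytes (2**40 bytes)."""
    return size_bytes / 2**40


if __name__ == "__main__":
    # Walk the allocation unit sizes from 4 KB up to 64 KB.
    for unit_kb in (4, 8, 16, 32, 64):
        limit = to_tib(max_ntfs_volume_bytes(unit_kb * 1024))
        print(f"{unit_kb:>2} KB allocation unit -> about {limit:.0f} TB maximum volume")
```

Under that assumption, a 4KB unit gives roughly 16TB, a 64KB unit roughly 256TB, and a 32KB unit roughly 128TB, which is consistent with the disk size reported for the CloudBerry mapped drive.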